Demo 02: Medallion Architecture Bronze → Silver → Gold Data Pipeline

Platform: Trino + Iceberg + Delta Lake + MinIO (S3-compatible)

Dataset: NYC FHV High-Volume Trip Data (2026-01)

Goal: Implement a production-style medallion architecture that progressively refines raw data into analytics-ready Gold tables, comparing Iceberg vs. Delta at each layer.

1. Context & Learning Objectives

In Experiment 01 you established the ingestion foundation — raw Parquet files landed in MinIO, then registered as a Hive external table and ingested into both an Iceberg Bronze table and a Delta Bronze table with platform-level metadata columns (_ingest_timestamp, _source_prefix, _ingestion_id).

Experiment 02 builds the transformation pipeline on top of that foundation. By the end you will understand:

- Why each medallion layer has a different schema contract and quality guarantee

- How to implement idempotent

MERGE(upsert) logic in both Iceberg and Delta via Trino - How partition pruning, hidden partitioning (Iceberg) vs. explicit partitioning (Delta) affect query performance

- How to implement Silver-layer data quality checks as SQL assertions before promoting data to Gold

- How to design a Gold aggregation table that a BI tool or ML feature store could consume directly

- The operational difference between Iceberg's snapshot isolation and Delta's transaction log when doing incremental loads

2. Medallion Layer Definitions (this project)

| Layer | Location | Guarantee | Schema evolution | Partition |

|---|---|---|---|---|

| Bronze | s3a://demo/bronze/ |

Raw copy + metadata; no deduplication | Append-only | day(pickup_datetime) |

| Silver | s3a://demo/silver/ |

Deduplicated, validated, typed, enriched | Evolving (add columns OK) | day(pickup_datetime) |

| Gold | s3a://demo/gold/ |

Business aggregates, SLA-grade quality | Stable (breaking changes → new table) | pickup_date (DATE) |

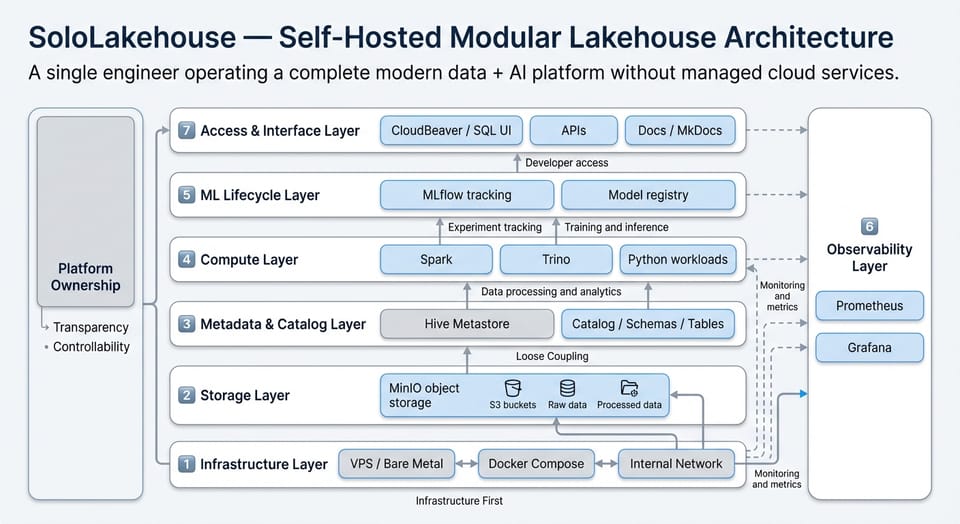

3. Architecture Diagram

MinIO (s3a://demo/)

│

├── landing/nyc_taxi/2026/02/26/ ← Parquet files (immutable, raw)

│

├── bronze/

│ ├── nyc_fhvhv_iceberg/ ← iceberg.bronze.fhvhv_tripdata_raw

│ └── nyc_fhvhv_delta/ ← delta.bronze.fhvhv_tripdata_raw_delta

│

├── silver/

│ ├── nyc_fhvhv_iceberg/ ← iceberg.silver.fhvhv_tripdata_clean

│ └── nyc_fhvhv_delta/ ← delta.silver.fhvhv_tripdata_clean_delta

│

└── gold/

├── nyc_fhvhv_iceberg/ ← iceberg.gold.fhvhv_daily_summary

└── nyc_fhvhv_delta/ ← delta.gold.fhvhv_daily_summary_delta

4. Experiment Steps

Step 0 — Verify Bronze Layer (Pre-flight)

Before building Silver, confirm the Bronze tables from Experiment 01 are populated and healthy.

-- Check Iceberg Bronze row count and partition distribution

SELECT

day(pickup_datetime) AS pickup_day,

COUNT(*) AS row_count,

COUNT(DISTINCT _ingestion_id) AS unique_rows,

MIN(_ingest_timestamp) AS first_ingested,

MAX(_ingest_timestamp) AS last_ingested

FROM iceberg.bronze.fhvhv_tripdata_raw

GROUP BY day(pickup_datetime)

ORDER BY pickup_day;

-- Quick sanity check: any NULLs in key columns?

SELECT

COUNT_IF(pickup_datetime IS NULL) AS null_pickup,

COUNT_IF(dropoff_datetime IS NULL) AS null_dropoff,

COUNT_IF(trip_miles <= 0) AS non_positive_miles,

COUNT_IF(base_passenger_fare < 0) AS negative_fare

FROM iceberg.bronze.fhvhv_tripdata_raw;

Expected outcome: You should see rows distributed across multiple days within the partition. Note down the total row count — you will compare it to Silver after deduplication.

Step 1 — Create Silver Schema and Tables

Silver applies three transformations on top of Bronze: (1) deduplication using _ingestion_id, (2) validation filtering out rows that violate business rules, and (3) enrichment with computed columns like trip_duration_minutes and fare_per_mile.

1a — Iceberg Silver Table

CREATE SCHEMA IF NOT EXISTS iceberg.silver;

DROP TABLE IF EXISTS iceberg.silver.fhvhv_tripdata_clean;

CREATE TABLE iceberg.silver.fhvhv_tripdata_clean (

-- business key (natural dedup key)

trip_id VARCHAR, -- SHA256 of (license+pickup_dt+dropoff_dt+pu+do)

-- core trip fields

hvfhs_license_num VARCHAR,

dispatching_base_num VARCHAR,

originating_base_num VARCHAR,

request_datetime TIMESTAMP(6),

on_scene_datetime TIMESTAMP(6),

pickup_datetime TIMESTAMP(6),

dropoff_datetime TIMESTAMP(6),

PULocationID INTEGER,

DOLocationID INTEGER,

-- raw metrics

trip_miles DOUBLE,

trip_time BIGINT,

base_passenger_fare DOUBLE,

tolls DOUBLE,

bcf DOUBLE,

sales_tax DOUBLE,

congestion_surcharge DOUBLE,

airport_fee DOUBLE,

tips DOUBLE,

driver_pay DOUBLE,

cbd_congestion_fee DOUBLE,

-- boolean flags (converted from VARCHAR 'Y'/'N')

is_shared_request BOOLEAN,

is_shared_match BOOLEAN,

is_access_a_ride BOOLEAN,

is_wav_request BOOLEAN,

is_wav_match BOOLEAN,

-- Silver enrichment columns

trip_duration_minutes DOUBLE, -- derived: (dropoff - pickup) in minutes

fare_per_mile DOUBLE, -- derived: base_passenger_fare / trip_miles

total_amount DOUBLE, -- derived: sum of all fare components

-- data quality metadata

dq_passed BOOLEAN, -- did this row pass all Silver DQ rules?

dq_flags VARCHAR, -- pipe-delimited list of failed rules (or NULL)

-- lineage

_bronze_ingestion_id VARCHAR, -- FK back to Bronze _ingestion_id

_silver_timestamp TIMESTAMP(6) -- when Silver processing ran

)

WITH (

format = 'PARQUET',

location = 's3a://demo/silver/nyc_fhvhv_iceberg/',

partitioning = ARRAY['day(pickup_datetime)']

);

1b — Delta Silver Table

CREATE SCHEMA IF NOT EXISTS delta.silver

WITH (location = 's3a://demo/silver/_schemas/silver/');

DROP TABLE IF EXISTS delta.silver.fhvhv_tripdata_clean_delta;

CREATE TABLE delta.silver.fhvhv_tripdata_clean_delta (

trip_id VARCHAR,

hvfhs_license_num VARCHAR,

dispatching_base_num VARCHAR,

originating_base_num VARCHAR,

request_datetime TIMESTAMP(6),

on_scene_datetime TIMESTAMP(6),

pickup_datetime TIMESTAMP(6),

dropoff_datetime TIMESTAMP(6),

PULocationID INTEGER,

DOLocationID INTEGER,

trip_miles DOUBLE,

trip_time BIGINT,

base_passenger_fare DOUBLE,

tolls DOUBLE,

bcf DOUBLE,

sales_tax DOUBLE,

congestion_surcharge DOUBLE,

airport_fee DOUBLE,

tips DOUBLE,

driver_pay DOUBLE,

cbd_congestion_fee DOUBLE,

is_shared_request BOOLEAN,

is_shared_match BOOLEAN,

is_access_a_ride BOOLEAN,

is_wav_request BOOLEAN,

is_wav_match BOOLEAN,

trip_duration_minutes DOUBLE,

fare_per_mile DOUBLE,

total_amount DOUBLE,

dq_passed BOOLEAN,

dq_flags VARCHAR,

_bronze_ingestion_id VARCHAR,

_silver_timestamp TIMESTAMP(6)

)

WITH (

location = 's3a://demo/silver/nyc_fhvhv_delta/'

);

Step 2 — Populate Silver via Bronze → Silver Transformation

This INSERT encapsulates the Bronze-to-Silver logic. Notice the CTE pattern: it is intentional. Breaking the transformation into named CTEs makes the logic readable, testable, and straightforward to convert into a dbt model or Airflow SQL task later.

-- ============================================================

-- Bronze → Silver: Iceberg version

-- Run this after Step 1a.

-- ============================================================

INSERT INTO iceberg.silver.fhvhv_tripdata_clean

WITH

-- CTE 1: Deduplicate Bronze on natural business key.

-- If the same trip was ingested twice (e.g. re-run without truncate),

-- keep only the FIRST occurrence by _ingest_timestamp.

deduped AS (

SELECT *,

ROW_NUMBER() OVER (

PARTITION BY hvfhs_license_num,

cast(pickup_datetime AS VARCHAR),

cast(dropoff_datetime AS VARCHAR),

cast(PULocationID AS VARCHAR),

cast(DOLocationID AS VARCHAR)

ORDER BY _ingest_timestamp ASC

) AS rn

FROM iceberg.bronze.fhvhv_tripdata_raw

),

-- CTE 2: Retain only the first copy of each trip.

unique_trips AS (

SELECT * FROM deduped WHERE rn = 1

),

-- CTE 3: Compute derived columns and convert flags.

enriched AS (

SELECT

-- trip_id: deterministic hash as the Silver business key

to_hex(md5(to_utf8(

hvfhs_license_num ||

cast(pickup_datetime AS VARCHAR) ||

cast(dropoff_datetime AS VARCHAR) ||

cast(PULocationID AS VARCHAR) ||

cast(DOLocationID AS VARCHAR)

))) AS trip_id,

hvfhs_license_num,

dispatching_base_num,

originating_base_num,

request_datetime,

on_scene_datetime,

pickup_datetime,

dropoff_datetime,

PULocationID,

DOLocationID,

trip_miles,

trip_time,

base_passenger_fare,

tolls,

bcf,

sales_tax,

congestion_surcharge,

airport_fee,

tips,

driver_pay,

cbd_congestion_fee,

-- flag conversion: 'Y' → TRUE, anything else → FALSE

(shared_request_flag = 'Y') AS is_shared_request,

(shared_match_flag = 'Y') AS is_shared_match,

(access_a_ride_flag = 'Y') AS is_access_a_ride,

(wav_request_flag = 'Y') AS is_wav_request,

(wav_match_flag = 'Y') AS is_wav_match,

-- derived metrics

date_diff('second', pickup_datetime, dropoff_datetime) / 60.0

AS trip_duration_minutes,

CASE WHEN trip_miles > 0

THEN base_passenger_fare / trip_miles

ELSE NULL

END AS fare_per_mile,

COALESCE(base_passenger_fare, 0)

+ COALESCE(tolls, 0)

+ COALESCE(bcf, 0)

+ COALESCE(sales_tax, 0)

+ COALESCE(congestion_surcharge, 0)

+ COALESCE(airport_fee, 0)

+ COALESCE(tips, 0)

+ COALESCE(cbd_congestion_fee, 0) AS total_amount,

_ingestion_id AS _bronze_ingestion_id,

current_timestamp AS _silver_timestamp

FROM unique_trips

),

-- CTE 4: Apply data quality rules and annotate each row.

-- This pattern (annotate rather than filter) is deliberate:

-- you keep DQ-failed rows visible in Silver so you can audit them,

-- but Gold will filter to dq_passed = TRUE only.

dq_annotated AS (

SELECT

*,

-- Evaluate all DQ rules; collect failures into a pipe-delimited string

ARRAY_JOIN(

FILTER(

ARRAY[

CASE WHEN pickup_datetime IS NULL THEN 'NULL_PICKUP_DT' END,

CASE WHEN dropoff_datetime IS NULL THEN 'NULL_DROPOFF_DT' END,

CASE WHEN dropoff_datetime <= pickup_datetime THEN 'NEGATIVE_DURATION' END,

CASE WHEN trip_miles <= 0 THEN 'NON_POSITIVE_MILES' END,

CASE WHEN base_passenger_fare < 0 THEN 'NEGATIVE_FARE' END,

CASE WHEN trip_duration_minutes > 480 THEN 'TRIP_OVER_8H' END,

CASE WHEN trip_miles > 500 THEN 'TRIP_OVER_500MI' END

],

x -> x IS NOT NULL

),

'|'

) AS dq_flags_raw

FROM enriched

)

SELECT

trip_id,

hvfhs_license_num,

dispatching_base_num,

originating_base_num,

request_datetime,

on_scene_datetime,

pickup_datetime,

dropoff_datetime,

PULocationID,

DOLocationID,

trip_miles,

trip_time,

base_passenger_fare,

tolls,

bcf,

sales_tax,

congestion_surcharge,

airport_fee,

tips,

driver_pay,

cbd_congestion_fee,

is_shared_request,

is_shared_match,

is_access_a_ride,

is_wav_request,

is_wav_match,

trip_duration_minutes,

fare_per_mile,

total_amount,

(dq_flags_raw = '' OR dq_flags_raw IS NULL) AS dq_passed,

NULLIF(dq_flags_raw, '') AS dq_flags,

_bronze_ingestion_id,

_silver_timestamp

FROM dq_annotated;

For the Delta version, run the same SQL but change the target table to delta.silver.fhvhv_tripdata_clean_delta and the source to delta.bronze.fhvhv_tripdata_raw_delta. The transformation logic is identical — this is intentional, because in a real platform the transformation SQL is format-agnostic.

Step 3 — Silver Data Quality Report

After ingestion, run this report to understand the quality profile of the dataset. This is the type of query a data platform engineer would include in an automated pipeline health dashboard.

-- Silver DQ Summary

SELECT

dq_passed,

dq_flags,

COUNT(*) AS trip_count,

ROUND(AVG(trip_miles), 2) AS avg_miles,

ROUND(AVG(trip_duration_minutes), 2) AS avg_duration_min,

ROUND(AVG(base_passenger_fare), 2) AS avg_fare

FROM iceberg.silver.fhvhv_tripdata_clean

GROUP BY dq_passed, dq_flags

ORDER BY trip_count DESC;

-- What fraction of trips passed DQ?

SELECT

COUNT_IF(dq_passed) AS passed,

COUNT_IF(NOT dq_passed) AS failed,

COUNT(*) AS total,

ROUND(100.0 * COUNT_IF(dq_passed) / COUNT(*), 2) AS pass_rate_pct

FROM iceberg.silver.fhvhv_tripdata_clean;

Record your results here:

| Metric | Value |

|---|---|

| Total Silver rows | (fill in) |

| Rows passing DQ | (fill in) |

| DQ pass rate (%) | (fill in) |

| Most common DQ flag | (fill in) |

| Bronze rows (from Step 0) | (fill in) |

| Rows removed by dedup | (fill in) |

Step 4 — Create Gold Schema and Tables

Gold contains pre-aggregated business metrics. The schema is intentionally narrow and stable — it models what a finance or ops team would put in a dashboard. Partition on pickup_date (DATE, not TIMESTAMP) because Gold queries are almost always date-range lookups.

4a — Iceberg Gold Table

CREATE SCHEMA IF NOT EXISTS iceberg.gold;

DROP TABLE IF EXISTS iceberg.gold.fhvhv_daily_summary;

CREATE TABLE iceberg.gold.fhvhv_daily_summary (

-- grain: one row per (pickup_date, hvfhs_license_num)

pickup_date DATE,

hvfhs_license_num VARCHAR,

-- volume metrics

total_trips BIGINT,

total_shared_trips BIGINT,

total_wav_trips BIGINT,

-- distance & time metrics

total_miles DOUBLE,

avg_miles_per_trip DOUBLE,

total_trip_minutes DOUBLE,

avg_trip_minutes DOUBLE,

-- financial metrics

total_base_fare DOUBLE,

total_tolls DOUBLE,

total_tips DOUBLE,

total_driver_pay DOUBLE,

total_amount DOUBLE,

avg_fare_per_trip DOUBLE,

avg_fare_per_mile DOUBLE,

-- metadata

_gold_timestamp TIMESTAMP(6),

_silver_row_count BIGINT -- how many Silver rows fed this aggregate

)

WITH (

format = 'PARQUET',

location = 's3a://demo/gold/nyc_fhvhv_iceberg/',

partitioning = ARRAY['pickup_date']

);

4b — Delta Gold Table

CREATE SCHEMA IF NOT EXISTS delta.gold

WITH (location = 's3a://demo/gold/_schemas/gold/');

DROP TABLE IF EXISTS delta.gold.fhvhv_daily_summary_delta;

CREATE TABLE delta.gold.fhvhv_daily_summary_delta (

pickup_date DATE,

hvfhs_license_num VARCHAR,

total_trips BIGINT,

total_shared_trips BIGINT,

total_wav_trips BIGINT,

total_miles DOUBLE,

avg_miles_per_trip DOUBLE,

total_trip_minutes DOUBLE,

avg_trip_minutes DOUBLE,

total_base_fare DOUBLE,

total_tolls DOUBLE,

total_tips DOUBLE,

total_driver_pay DOUBLE,

total_amount DOUBLE,

avg_fare_per_trip DOUBLE,

avg_fare_per_mile DOUBLE,

_gold_timestamp TIMESTAMP(6),

_silver_row_count BIGINT

)

WITH (

location = 's3a://demo/gold/nyc_fhvhv_delta/'

);

Step 5 — Populate Gold (Silver → Gold Aggregation)

-- ============================================================

-- Silver → Gold: Iceberg version

-- Only process DQ-passed rows (dq_passed = TRUE).

-- ============================================================

INSERT INTO iceberg.gold.fhvhv_daily_summary

SELECT

CAST(pickup_datetime AS DATE) AS pickup_date,

hvfhs_license_num,

COUNT(*) AS total_trips,

COUNT_IF(is_shared_request) AS total_shared_trips,

COUNT_IF(is_wav_request) AS total_wav_trips,

ROUND(SUM(trip_miles), 4) AS total_miles,

ROUND(AVG(trip_miles), 4) AS avg_miles_per_trip,

ROUND(SUM(trip_duration_minutes), 2) AS total_trip_minutes,

ROUND(AVG(trip_duration_minutes), 2) AS avg_trip_minutes,

ROUND(SUM(base_passenger_fare), 2) AS total_base_fare,

ROUND(SUM(tolls), 2) AS total_tolls,

ROUND(SUM(tips), 2) AS total_tips,

ROUND(SUM(driver_pay), 2) AS total_driver_pay,

ROUND(SUM(total_amount), 2) AS total_amount,

ROUND(AVG(base_passenger_fare), 2) AS avg_fare_per_trip,

ROUND(AVG(fare_per_mile), 4) AS avg_fare_per_mile,

current_timestamp AS _gold_timestamp,

COUNT(*) AS _silver_row_count

FROM iceberg.silver.fhvhv_tripdata_clean

WHERE dq_passed = TRUE -- Gold only contains validated data

GROUP BY

CAST(pickup_datetime AS DATE),

hvfhs_license_num;

Apply the same INSERT to the Delta Gold table, sourcing from delta.silver.fhvhv_tripdata_clean_delta.

Step 6 — Gold Validation Queries

These queries prove your Gold table is correct and analytically useful. Run them and record the outputs.

-- 6a: Provider performance summary

SELECT

hvfhs_license_num,

SUM(total_trips) AS trips,

ROUND(SUM(total_miles), 0) AS total_miles,

ROUND(SUM(total_amount), 2) AS gross_revenue,

ROUND(AVG(avg_fare_per_trip), 2) AS avg_fare,

ROUND(AVG(avg_trip_minutes), 1) AS avg_duration_min,

ROUND(AVG(avg_fare_per_mile), 3) AS avg_fare_per_mile

FROM iceberg.gold.fhvhv_daily_summary

GROUP BY hvfhs_license_num

ORDER BY trips DESC;

-- 6b: Daily trend (useful for spotting data gaps)

SELECT

pickup_date,

SUM(total_trips) AS daily_trips,

ROUND(SUM(total_amount), 2) AS daily_revenue,

ROUND(AVG(avg_trip_minutes), 1) AS avg_duration

FROM iceberg.gold.fhvhv_daily_summary

GROUP BY pickup_date

ORDER BY pickup_date;

-- 6c: Cross-format consistency check (Iceberg vs Delta Gold must agree)

SELECT

i.pickup_date,

i.hvfhs_license_num,

i.total_trips AS iceberg_trips,

d.total_trips AS delta_trips,

i.total_trips - d.total_trips AS diff

FROM iceberg.gold.fhvhv_daily_summary i

JOIN delta.gold.fhvhv_daily_summary_delta d

ON i.pickup_date = d.pickup_date

AND i.hvfhs_license_num = d.hvfhs_license_num

WHERE i.total_trips <> d.total_trips -- only show discrepancies

ORDER BY ABS(i.total_trips - d.total_trips) DESC;

Expected for 6c: Zero rows. If you see discrepancies, trace back to whether the Bronze source tables were identical and whether both Silver transforms ran against the same input.

Step 7 — Table Format Introspection

This step reinforces your understanding of what Iceberg and Delta actually write to storage.

-- Iceberg: inspect snapshots created by each INSERT

SELECT

committed_at,

snapshot_id,

operation,

summary['added-records'] AS rows_added,

summary['total-records'] AS total_records,

summary['added-files-size'] AS bytes_added

FROM iceberg.silver."fhvhv_tripdata_clean$snapshots"

ORDER BY committed_at;

-- Iceberg: inspect physical files per partition

SELECT

file_path,

file_format,

partition,

record_count,

file_size_in_bytes

FROM iceberg.silver."fhvhv_tripdata_clean$files"

ORDER BY partition;

-- Delta: inspect transaction history

SELECT

version,

timestamp,

operation,

operationParameters

FROM delta.silver."fhvhv_tripdata_clean_delta$history"

ORDER BY version;

Discussion questions to answer in your notes:

- How many Parquet files did Iceberg create in Silver, and why that number?

- What is the snapshot ID of the INSERT operation in Iceberg Silver? What would happen if you ran the INSERT again without truncating?

- In the Delta history, what

operationtype is recorded for the INSERT? How does this differ from aMERGE?

Step 8 — (Optional Advanced) Incremental Load Simulation

This step simulates what happens in a real pipeline when new data arrives the next day.

-- Simulate a second batch: re-ingest a small subset of rows

-- with slightly different _ingest_timestamp to test deduplication.

INSERT INTO iceberg.bronze.fhvhv_tripdata_raw

SELECT

hvfhs_license_num,

dispatching_base_num,

originating_base_num,

request_datetime,

on_scene_datetime,

pickup_datetime,

dropoff_datetime,

PULocationID,

DOLocationID,

trip_miles,

trip_time,

base_passenger_fare,

tolls,

bcf,

sales_tax,

congestion_surcharge,

airport_fee,

tips,

driver_pay,

cbd_congestion_fee,

shared_request_flag,

shared_match_flag,

access_a_ride_flag,

wav_request_flag,

wav_match_flag,

current_timestamp AS _ingest_timestamp, -- new timestamp = "second run"

's3a://demo/landing/nyc_taxi/2026/02/26/' AS _source_prefix,

to_hex(md5(to_utf8(

hvfhs_license_num ||

cast(pickup_datetime AS VARCHAR) ||

cast(dropoff_datetime AS VARCHAR) ||

cast(PULocationID AS VARCHAR) ||

cast(DOLocationID AS VARCHAR)

))) AS _ingestion_id

FROM iceberg.bronze.fhvhv_tripdata_raw

WHERE CAST(pickup_datetime AS DATE) = DATE '2026-01-15' -- pick one day as the "new batch"

LIMIT 5000;

-- Now re-run the Silver INSERT (Step 2).

-- The Silver CTE deduplication should absorb the duplicates cleanly.

-- Verify: Silver row count should NOT increase by 5000.

SELECT COUNT(*) FROM iceberg.silver.fhvhv_tripdata_clean;

5. Experiment Results Template

Fill in after completing all steps.

| Metric | Iceberg | Delta |

|---|---|---|

| Bronze row count | ||

| Silver row count (after dedup) | ||

| Rows removed by deduplication | ||

| DQ pass rate (%) | ||

| Gold rows (distinct date × provider) | ||

| Silver INSERT duration (approx.) | ||

| Gold INSERT duration (approx.) | ||

| Number of Parquet files in Silver | ||

| Storage size of Silver layer (bytes) | ||

| Cross-format Gold discrepancies |

6. Key Concepts Reinforced

Why annotate DQ failures rather than filter them at Silver? Because Silver is the audit layer. Finance and compliance teams need to understand what was rejected and why. Filtering at Silver would destroy that audit trail. Gold is where you enforce the hard quality gate (WHERE dq_passed = TRUE).

Why use a CTE chain instead of nested subqueries? CTE chains map directly to dbt models. Each CTE (deduped, unique_trips, enriched, dq_annotated) becomes a potential intermediate model. Writing it this way now costs nothing and makes the future migration to dbt trivial.

Why does Gold use DATE instead of TIMESTAMP for partitioning? Because every Gold query is a date range scan — "show me the last 30 days of revenue". Partitioning on DATE eliminates entire partitions from scan with a simple WHERE pickup_date BETWEEN .... Timestamp partitioning at Gold would fragment the partition layout unnecessarily.

What is the difference between Iceberg snapshots and Delta transaction log? Iceberg writes immutable snapshot metadata files that point to data files. Delta writes a sequential JSON transaction log (_delta_log/). Both enable time travel, but Iceberg's snapshot model is better suited for concurrent multi-engine reads (Trino + Spark simultaneously), while Delta's log compaction (OPTIMIZE + VACUUM) is more mature in the Databricks ecosystem.

7. Next Experiment Preview — Experiment 03

Experiment 03: Incremental Merge & Time Travel

You will replace the naive INSERT-based Silver population with a proper MERGE INTO (upsert) statement, implement time travel queries to inspect historical versions of your Silver table, and measure the performance difference between a full-reload vs. incremental merge strategy on the same dataset.

Document version: 2026-02 | Platform: Trino 428 + Iceberg 1.4 + Delta 3.x + MinIO RELEASE.2024